A couple of years ago, I presented a project called ProcessingModule at the Chisel Community Conference. Its goal was to generate a simple CPU core given an instruction set architecture (ISA). The ISA would also be written in Chisel, but in a format that is easy to read and write for those unfamiliar with the language. An example implementation called AdderModule was provided with a basic ISA that only adds integers and accesses memory, plus a branch instruction that checks whether a register value is greater than zero.

I had to crunch to get the first version ready for the conference, but it worked with a handful of unit test cases. Afterwards, I started thinking about how it could support a popular ISA like RISC-V and get through the standard RISC-V test suite. The biggest problem was the possibility of data hazards when nearby instructions depend on each other. Fixing these proved challenging because the code was a single large blob that was difficult to reason about. Pipeline stages were not clearly defined, so it was hard to figure out where hazards should be detected and how they should be handled.

So I decided to go back to the drawing board with the book Digital Design and Computer Architecture by David Money Harris and Sarah L. Harris. I closely studied the chapters on five-stage pipeline design and rewrote the code in a similar fashion. A fetch module accesses instruction memory, a decode module parses the instructions and accesses the register file, an execute module performs integer computations, and a memory module accesses data memory. The decode module also contains logic for detecting hazards and triggers a stall if one occurs. Instruction results are forwarded to the register file early when possible to reduce the frequency of stalls. The code for each pipeline stage is much smaller and simpler than the previous implementation, so each stage is easier to understand and debug. The stages can also be tested separately, making it possible to write shorter unit tests that cover more scenarios.
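To make the hazard check in the decode stage more concrete, here is a minimal sketch of read-after-write detection. It is a plain-Scala software model rather than Chisel hardware, and every name in it (`HazardModel`, `RegUse`, `mustStall`) is hypothetical and not part of ProcessingModule. It assumes each instruction can report which registers it reads and which register, if any, it writes.

```scala
// Plain-Scala model of read-after-write hazard detection.
// Hypothetical names; this is not the ProcessingModule implementation.
object HazardModel {
  /** Register usage of one instruction: the registers it reads and the
    * register it writes, if any. */
  final case class RegUse(reads: Seq[Int], writes: Option[Int])

  /** True if `decoding` reads a register that an older instruction still
    * in the pipeline has not yet written back. */
  def mustStall(decoding: RegUse, inFlight: Seq[RegUse]): Boolean =
    inFlight.exists { older =>
      older.writes.exists(w => decoding.reads.contains(w))
    }
}
```

For example, if an older instruction in flight writes register 0 and the instruction being decoded reads register 0, `mustStall` returns true. With forwarding, a real pipeline can often skip the stall in this case, which this sketch does not model.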

In addition to the underlying implementation, the ISA interface has also changed. The biggest difference is that a register file is now part of the interface, though the register width and number of registers can still be customized. There are more generator methods in the interface to help the implementation identify data dependencies between instructions. The new interface is shown below:

    import chisel3._

    abstract class InstructionLogic(val name : String) {
      /** Number of operands */
      val numOps : Int = 0

      /** Indicates if the given word matches this instruction class */
      def decode(instr : UInt) : Bool

      /** Index in register file that specified operand is at */
      def getRFIndex(instr : UInt, opIndex : Int) : UInt = 0.U

      /** Indicates if this instruction is a branch */
      def branch() : Bool = false.B

      /** Indicates if this branch instruction is relative to PC */
      def relativeBranch() : Bool = false.B

      /** Returns instruction address or offset that should jump to */
      def getBranchPC(instr : UInt, ops : Vec[UInt]) : SInt = 0.S

      /** Indicates if this instruction reads from data memory */
      def readMemory() : Bool = false.B

      /** Indicates if this instruction writes to data memory */
      def writeMemory() : Bool = false.B

      /** Indicates if this instruction writes to the register file */
      def writeRF() : Bool = false.B

      /** Returns data address that should be accessed */
      def getAddress(instr : UInt, ops : Vec[UInt]) : UInt = 0.U

      /** Returns index in register file that should be written */
      def getWriteIndex(instr : UInt, ops : Vec[UInt]) : UInt = 0.U

      /** Returns data that should be written or stored */
      def getData(instr : UInt, ops : Vec[UInt]) : UInt = 0.U

      /** Returns memory data that should be written to register file
       *
       * The data returned by this logic is calculated in the memory
       * stage using data just loaded from data memory
       */
      def getRFWriteData(resultData : UInt, memData : UInt) : UInt = memData
    }

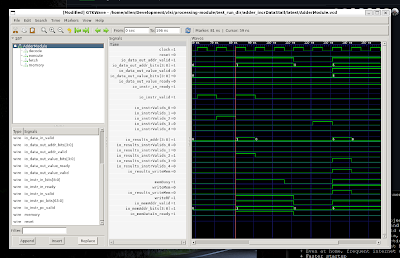

The unit testing strategy has also changed in several ways. The DecoupledTester described in the last post is not cycle-accurate: input and output events may happen in any cycle as long as the event order is consistent. I wanted more visibility and control over the exact timing of the pipeline, so I wrote a new test harness called DecoupledPeekPokeTester. It still accepts a sequence of high-level events (instruction/data request/receive), but an event's position in the sequence denotes the exact cycle in which it is expected, as shown below:

    AdderModule should "increment with memory value with stall" in {
      executeTest("incrDataStall"){
        (dut : AdderModule) => new DecoupledPeekPokeTester(dut){
          def cycles = List(
            List(new InstrReq(addr = 0)), // fetch 0
            List(new InstrRcv(instr = incrData(0, 1)), new InstrReq(addr = 1)), // receive incrData, fetch 1
            Nil, // decode incrData
            List(new InstrRcv(instr = store(0, 6))), // execute incrData, receive store
            Nil, // memory incrData, decode store
            Nil, // stall on incrData
            List(new LoadRcv(4), new LoadReq(addr = 1)), // memory incrData, execute store
            Nil, // writeback incrData, memory store
            Nil, // stall on store
            Nil, // stall on store
            Nil, // stall on store
            Nil, // stall on store
            List(new StoreReq(addr = 6, data = 4)), // writeback store to 6
            Nil,
            Nil
          )
        }
      } should be (true)
    }
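The difference between the two checking styles can be illustrated with a small plain-Scala model. This is only a sketch of the idea and not the code of either harness: `CycleCheckModel`, `Fetch`, `Receive`, `sameOrder` and `sameCycles` are hypothetical names, and the order-only check is simplified to comparing the flattened event lists.

```scala
// Plain-Scala model contrasting order-only and cycle-exact trace checks.
// Hypothetical names; neither DecoupledTester nor DecoupledPeekPokeTester
// is implemented this way as far as this sketch is concerned.
object CycleCheckModel {
  sealed trait Event
  final case class Fetch(addr: Int) extends Event   // stand-in for a request event
  final case class Receive(word: Int) extends Event // stand-in for a receive event

  /** One inner list per clock cycle; Nil means no event in that cycle. */
  type Trace = List[List[Event]]

  /** Order-only check: the same events in the same order, in any cycle. */
  def sameOrder(expected: Trace, observed: Trace): Boolean =
    expected.flatten == observed.flatten

  /** Cycle-exact check: each cycle must hold exactly the expected events. */
  def sameCycles(expected: Trace, observed: Trace): Boolean =
    expected.map(_.toSet) == observed.map(_.toSet)
}
```

A trace in which an event arrives one cycle late still passes `sameOrder` but fails `sameCycles`, which is the kind of timing regression a cycle-exact harness is meant to catch.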

With this harness, it is possible to verify that certain instruction sequences achieve an ideal cycles-per-instruction rate (CPI) of 1. DecoupledPeekPokeTester cases are also executed directly in Chisel with the Treadle interpreter instead of generating Verilog code that must be compiled by Verilator, which allows them to run much faster. Even with almost three times as many unit tests (24 vs. 9), the whole suite finishes in about a third of the time compared to DecoupledTester (17 seconds vs. 52 seconds).
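As a rough illustration of what an ideal CPI of 1 means, the sketch below uses the standard textbook relation for a pipeline with no stalls: the first instruction takes as many cycles as there are stages, and each later instruction completes one cycle after the previous one. `CpiModel` and its methods are hypothetical helpers written for this post, not part of the test harness.

```scala
// Plain-Scala arithmetic for pipeline cycle counts and CPI.
// Hypothetical helper names, for illustration only.
object CpiModel {
  /** Cycles needed for `n` instructions in a `depth`-stage pipeline
    * when no instruction ever stalls. */
  def idealCycles(depth: Int, n: Int): Int = depth + n - 1

  /** Average cycles per instruction. */
  def cpi(cycles: Int, n: Int): Double = cycles.toDouble / n
}
```

With five stages and 1000 instructions, `idealCycles` gives 1004 cycles, so the CPI approaches 1 as the instruction count grows. Every stall cycle adds one to the total, which is why a cycle-exact test can confirm that a sequence runs without stalls.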

Now, the path to a RISC-V implementation is much clearer. The next steps are to convert the basic integer instruction set into the format required by ProcessingModule and run the standard instruction test suite. Once that is done, we can proceed to integrating the core into the Chipyard system and implementing it on an FPGA.